Generative UI: the new front end of the internet?

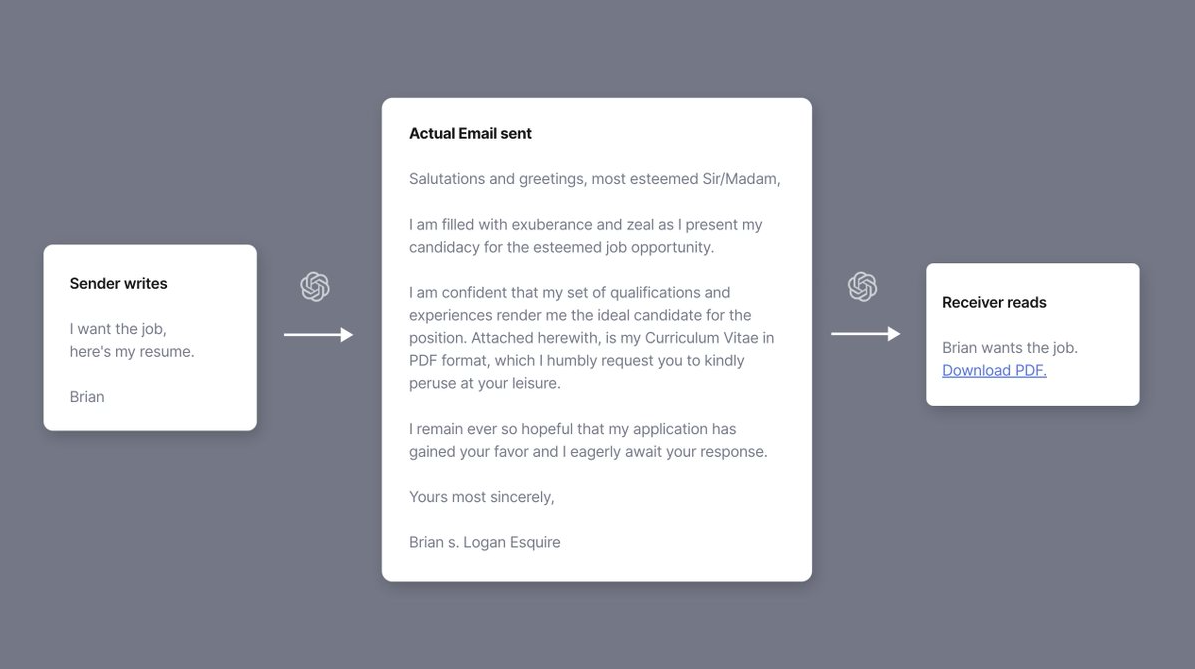

This image, posted on Twitter, is a great summary of how AI is about to change our lives.

It’s a fun idea, and one that many people might not have taken seriously.

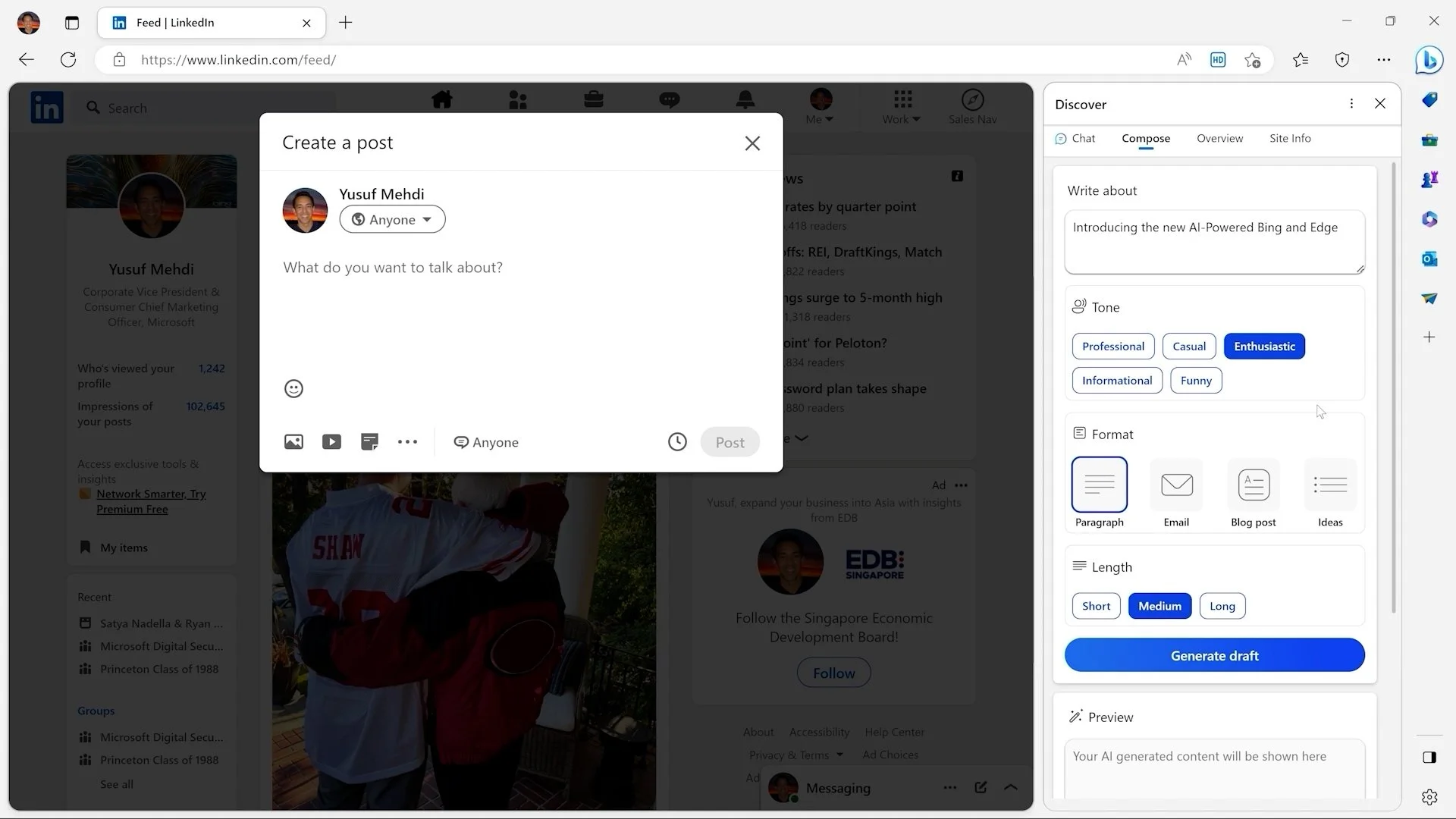

And then, a few weeks later, Microsoft demoed this exact feature as a centrepiece in its multi-billion-dollar investment in disrupting how we all use the internet.

The new sidebar in the Edge browser can be used to compose a message (in this case, a LinkedIn post) and summarise a page (presumably made up of everyone else’s AI-generated messages).

I’ve had this sidebar stuck in my head for the last few days — alongside two thoughts.

Will that sidebar, over time, become the main screen?

What does this mean for the future of interaction and ‘product’?

Web design has been trending towards a singularity for the last 30 years.

In the beginning, the web was chaotic and idiosyncratic. It was Space Jam and GeoCities.

Over time, the web got more professional, more organised, and simpler. We’ve settled on design conventions, and everything has become very samey.

AI may be about to turn this from a design trend into a platform shift, because with AI it is increasingly feasible to layer a completely separate user experience over almost everything.

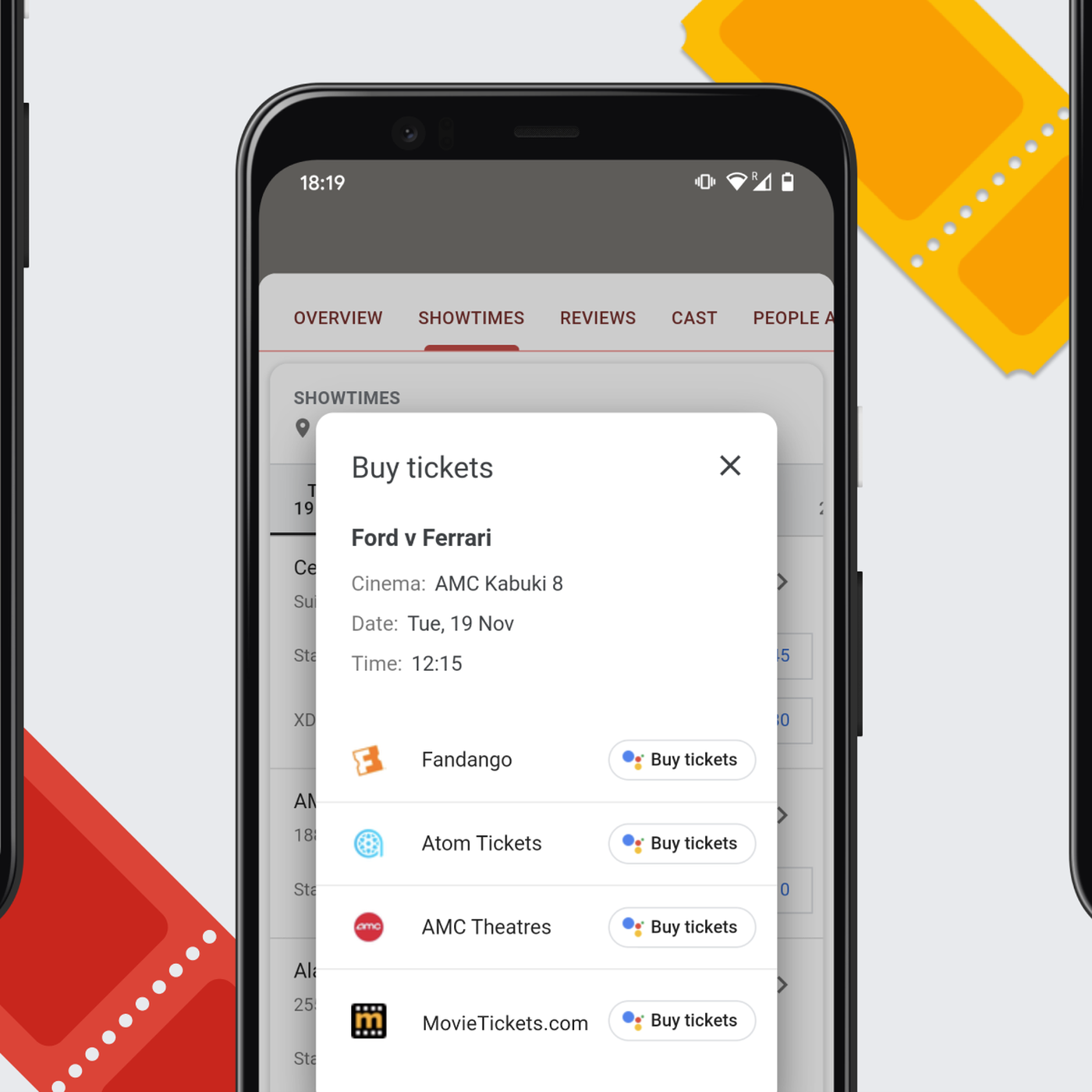

This isn’t a new concept. Google Duplex has been doing a version of this for a few years: it can book cinema tickets on your behalf by navigating the (often annoying) ticket purchase journey for you.

The new Bing Chat is on a similar path.

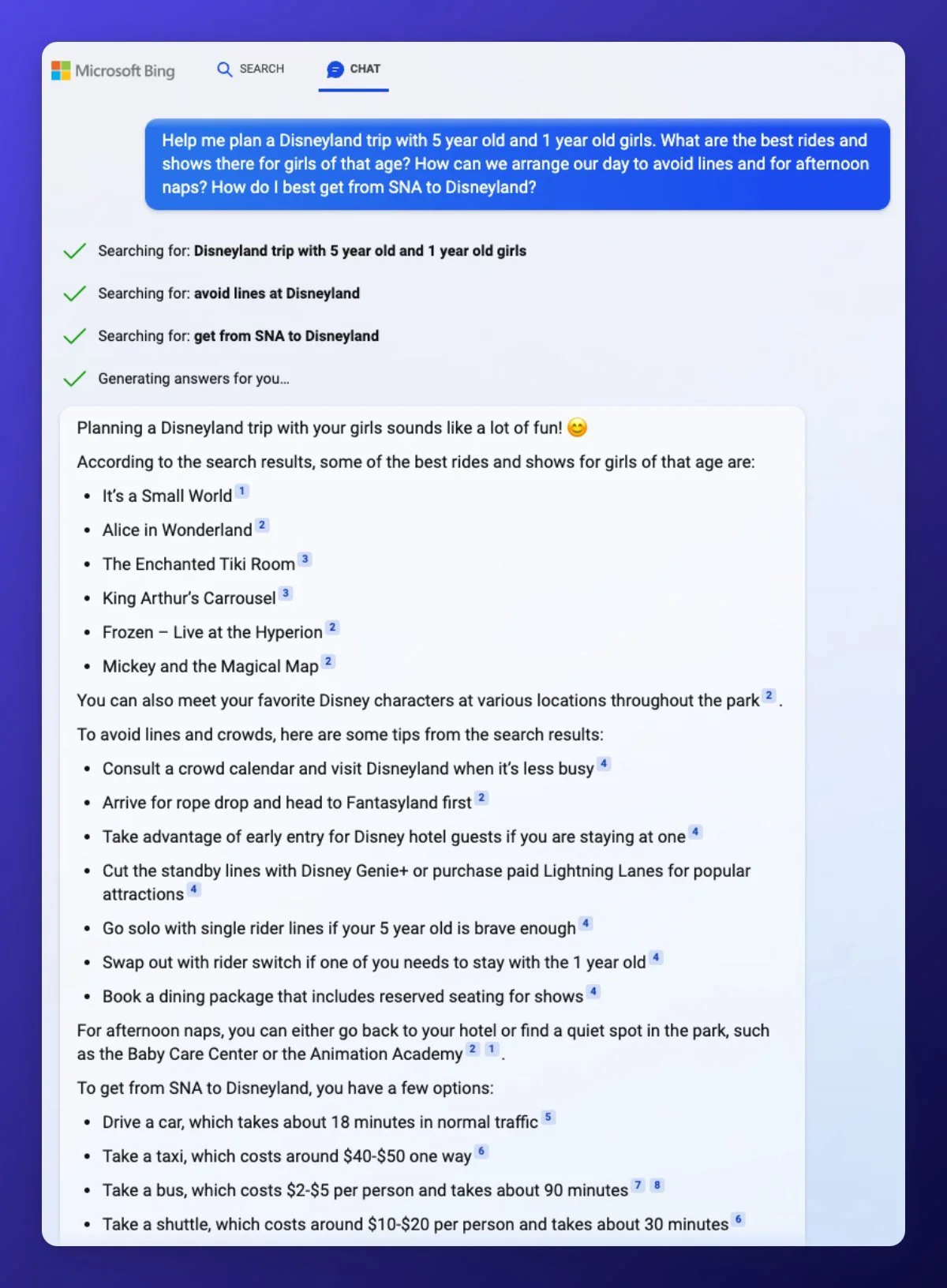

Put these ideas together, and you start to wonder whether the underlying websites are needed at all. Bing Chat searches the web, constructs a response, and cites its sources.

In many ways, Bing would be better off having access to a structured set of raw/live data about Disneyland, and some functions (e.g. to book tickets) with which it could construct the response and take actions. For Disney itself, this would be great — it could publish content and functions specifically to be used by AI platforms to deliver personalised experiences that drive sales.
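To make the idea a little more concrete, here is a minimal Python sketch of what an "AI-facing" back end could look like: a catalogue of functions described in machine-readable form, plus a dispatcher that an AI platform calls with a function name and JSON arguments. Everything in it is hypothetical. The tool names `ticket_availability` and `book_tickets`, the simplified parameter schema, and the in-memory ticket counts are placeholders standing in for a real booking system; this is not Disney's or Bing's actual interface, only an illustration of the pattern.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    """A function a provider publishes for AI platforms to call (hypothetical)."""
    name: str
    description: str
    parameters: dict[str, str]  # parameter name -> type name (a simplified schema)
    handler: Callable[..., Any]


# Placeholder inventory standing in for a live booking system.
_tickets_left: dict[str, int] = {"2023-06-01": 3}


def ticket_availability(date: str) -> dict:
    return {"ok": True, "date": date, "available": _tickets_left.get(date, 0)}


def book_tickets(date: str, quantity: int) -> dict:
    left = _tickets_left.get(date, 0)
    if quantity < 1 or quantity > left:
        return {"ok": False, "reason": f"cannot book {quantity}; {left} left"}
    _tickets_left[date] = left - quantity
    return {"ok": True, "date": date, "quantity": quantity}


# Registry of everything the provider exposes, keyed by tool name.
TOOLS = {t.name: t for t in [
    Tool("ticket_availability", "Tickets still available on a date",
         {"date": "string"}, ticket_availability),
    Tool("book_tickets", "Book a number of tickets for a date",
         {"date": "string", "quantity": "integer"}, book_tickets),
]}


def describe_tools() -> str:
    """Machine-readable catalogue an AI platform could read to learn what it may call."""
    return json.dumps([
        {"name": t.name, "description": t.description, "parameters": t.parameters}
        for t in TOOLS.values()
    ])


def call_tool(name: str, arguments_json: str) -> dict:
    """Run a call the AI platform chose to make, given a tool name and JSON arguments.

    Only argument names are checked here; argument types are not validated.
    """
    tool = TOOLS.get(name)
    if tool is None:
        return {"ok": False, "reason": f"unknown tool {name!r}"}
    args = json.loads(arguments_json)
    if set(args) != set(tool.parameters):
        return {"ok": False, "reason": f"expected arguments {sorted(tool.parameters)}"}
    return tool.handler(**args)
```

An AI platform would first read `describe_tools()`, then, having worked out that the user wants two tickets, call `call_tool("book_tickets", '{"date": "2023-06-01", "quantity": 2}')` and present the structured result in its own words. The provider publishes capabilities rather than pages, and the platform decides how to show them.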

The same idea could apply to journalism, online shopping, social media, streaming video, or data visualisation.

Let’s take a Premier League football match. The current paradigm is well established: a live TV broadcast, edited highlights on YouTube, match stats tracked by Opta, and match reports by journalists. This works pretty well, but it is a completely flat and generic production cycle: every viewer gets the same broadcast, the same highlights, and the same reports.

With AI, personalisation could go much further. AI systems could ‘watch’ the match, and construct personalised highlight edits on request. I should be able to type “Show me every touch that Haaland had” or ask “How did Casemiro play?” and get a natural language response pulling together statistics, video clips, and insights from my favourite journalists.
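As a rough sketch of how a request like "Show me every touch that Haaland had" might be served, assume the match has already been turned into a structured event feed whose timestamps point into the broadcast footage. The `MatchEvent` fields, the event labels, the two-second padding, and the sample events below are all illustrative assumptions, not any real data provider's format or real match data. The code filters one player's on-ball events and merges overlapping clips into a single highlight reel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatchEvent:
    """One on-pitch event, as a hypothetical structured feed might record it."""
    minute: int
    player: str
    kind: str          # illustrative labels such as "pass", "shot", "tackle"
    clip_start: float  # seconds into the broadcast where the footage begins
    clip_end: float    # seconds into the broadcast where the footage ends


# Event kinds treated as a "touch" of the ball (an assumption for this sketch).
TOUCH_KINDS = {"touch", "pass", "dribble", "shot", "header", "tackle"}


def touches_for(events: list[MatchEvent], player: str) -> list[MatchEvent]:
    """Every on-ball event by one player, in broadcast order."""
    return sorted(
        (e for e in events if e.player == player and e.kind in TOUCH_KINDS),
        key=lambda e: e.clip_start,
    )


def highlight_reel(events: list[MatchEvent], player: str,
                   padding: float = 2.0) -> list[tuple[float, float]]:
    """(start, end) clip ranges for a player's touches, padded, with overlaps merged."""
    reel: list[tuple[float, float]] = []
    for e in touches_for(events, player):
        start, end = max(0.0, e.clip_start - padding), e.clip_end + padding
        if reel and start <= reel[-1][1]:
            # Touches close together become one continuous clip.
            reel[-1] = (reel[-1][0], max(reel[-1][1], end))
        else:
            reel.append((start, end))
    return reel


def touch_summary(events: list[MatchEvent], player: str) -> dict[str, int]:
    """Count of each kind of touch: a starting point for a "How did X play?" answer."""
    counts: dict[str, int] = {}
    for e in touches_for(events, player):
        counts[e.kind] = counts.get(e.kind, 0) + 1
    return counts


# Made-up sample events, purely for illustration.
events = [
    MatchEvent(3, "Haaland", "shot", 190.0, 196.0),
    MatchEvent(3, "Haaland", "touch", 195.0, 197.0),
    MatchEvent(10, "Casemiro", "tackle", 600.0, 604.0),
    MatchEvent(20, "Haaland", "header", 1200.0, 1203.0),
]
print(highlight_reel(events, "Haaland"))  # [(188.0, 199.0), (1198.0, 1205.0)]
print(touch_summary(events, "Haaland"))   # {'shot': 1, 'touch': 1, 'header': 1}
```

The harder parts of the idea, having an AI "watch" the match to produce the event feed and turning the counts into a natural-language answer, sit outside this sketch; it only shows that, once the data is structured, the personalised edit itself is a simple query.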

As a user, there’s no benefit to having these products in different apps. I’d much rather do this from one clean, central interface — which might look a lot like Bing Chat.

Front ends, on demand

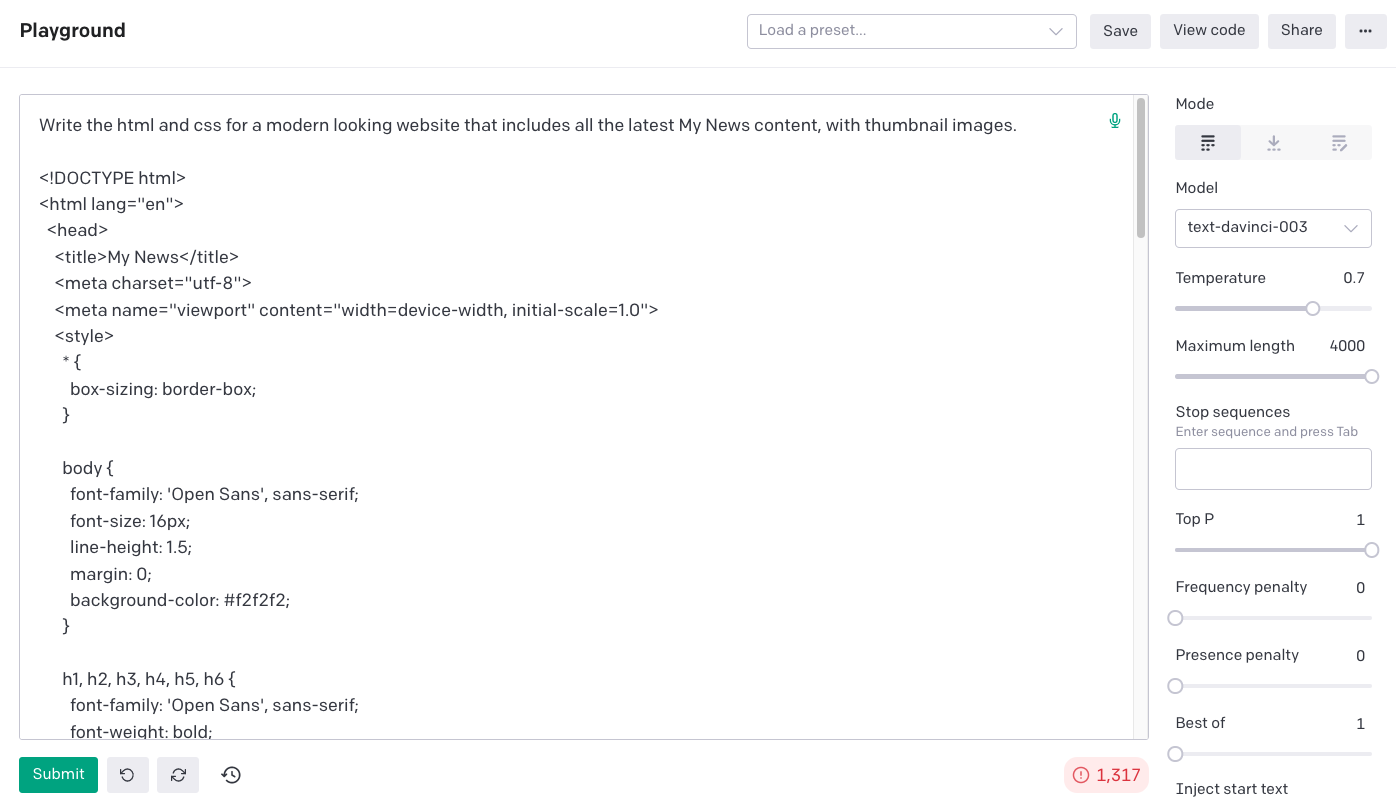

AI is capable of this level of disruption in part because it can generate many of the elements that made up the old ‘front end’ of the web — content, images, and code.

For example, I can go to GPT-3 and say: “Write the html and css for a modern looking website that includes all the latest My News content, with thumbnail images.”

We have also got used to the idea that a product is defined by its presentation paradigm:

- Twitter is a stack of text ‘cards’

- YouTube is longform landscape videos

- Instagram is square images

- TikTok is short videos in portrait mode

- Netflix is thumbnail carousels and full-screen viewing

In an AI-native future, all of this rigidity seems to fade away. When browsing a data chart, the user could ask for an extra feature to be added and the system could both understand the request and immediately generate it.

Like Tom Cruise in Minority Report, we’ll have a very fluid, flexible and organic way to interact with technology — just without the magic gloves.

You can make a decent start at this already by describing the UI you want to see to an image generation model. I asked Midjourney for a design for a personalised homepage and got these:

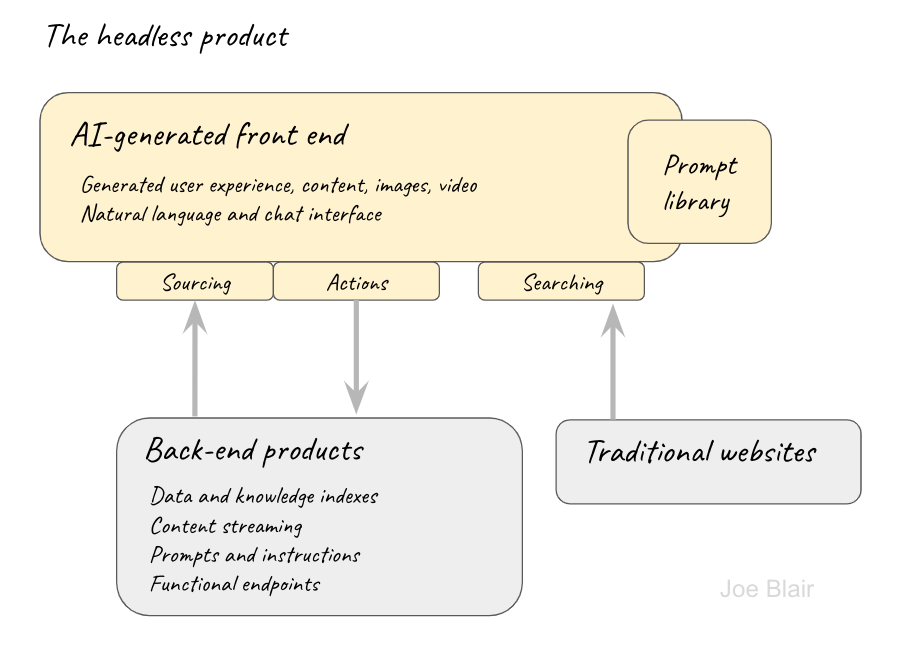

The headless product

All of this points to a new stack for product development. We’ve got used to separating the front end from the back end, but we’re looking at a future where the front end might not be needed at all, and the ‘back end’ might be built specifically to serve the functions of an AI platform.

The age of the headless product.